Autonomous Mobile Manipulation with Legged Robots

Legged robots have the potential to outperform their wheeled counterparts when operating in unstructured environments or traversing rough terrain. Recent advances from Boston Dynamics, Ghost Robotics, ANYbotics, Unitree Robotics, and other companies show that legged robots are steadily improving at locomotion, and they have recently begun to see some commercial success. However, legged robots are still used mostly as locomotion research platforms, and their limited commercial applications are restricted to inspection, security, and “last-meter” delivery, where interaction with the environment is unnecessary and often deliberately avoided. Given the inherent ability of legged robots to use their limbs as general-purpose manipulators, my research seeks to demonstrate ways for legged robots to accomplish tasks that require interaction with their surroundings, such as rearrangement planning, navigation among movable obstacles, or strategic rearrangement of the robot’s environment to escape a room.

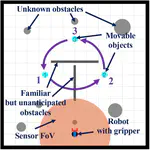

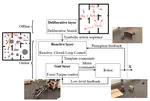

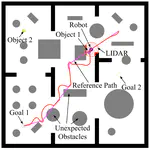

More specifically, in this project we develop a modular architecture that is independent of the task, environment, and platform (a property inherently unavailable in end-to-end deep learning schemes) and that comes with formal correctness guarantees holding under stated assumptions about the environment. In this architecture, an offline deliberative layer for task planning works closely with an online reactive module that uses exteroception to handle environmental uncertainty.
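To make the role of the offline deliberative layer concrete, the following is a minimal sketch of a task planner that is optimal in the number of object moves. It assumes a toy, hypothetical abstraction in which objects occupy discrete slots and the robot relocates one object at a time into a free slot; breadth-first search is swapped in here as the simplest optimal planner and is not necessarily the search used in this project. The names `successors`, `plan_rearrangement`, `State`, and `Action` are illustrative only.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Hypothetical symbolic state: state[i] is the slot currently holding object i.
State = Tuple[int, ...]
Action = Tuple[int, int]  # (object index, target slot)


def successors(state: State, num_slots: int) -> List[Tuple[Action, State]]:
    """All states reachable by moving a single object into a currently free slot."""
    occupied = set(state)
    result: List[Tuple[Action, State]] = []
    for obj in range(len(state)):
        for target in range(num_slots):
            if target not in occupied:
                nxt = list(state)
                nxt[obj] = target
                result.append(((obj, target), tuple(nxt)))
    return result


def plan_rearrangement(start: State, goal: State, num_slots: int) -> Optional[List[Action]]:
    """Breadth-first search: returns a plan with the fewest object moves, or None if unreachable."""
    parent: Dict[State, Optional[Tuple[State, Action]]] = {start: None}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        if state == goal:
            # Walk back through the parent links to recover the move sequence.
            plan: List[Action] = []
            link = parent[state]
            while link is not None:
                prev, action = link
                plan.append(action)
                link = parent[prev]
            return plan[::-1]
        for action, nxt in successors(state, num_slots):
            if nxt not in parent:  # first visit is along a shortest path
                parent[nxt] = (state, action)
                queue.append(nxt)
    return None
```

For example, `plan_rearrangement((0, 1), (1, 0), num_slots=3)` swaps two objects by way of the free third slot and returns `[(0, 2), (1, 0), (0, 1)]`. Because breadth-first search expands every state up to the optimal depth, this style of planner only scales to small symbolic abstractions; the reactive layer is what absorbs the geometric detail.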

The reactive module communicates with a platform-specific gait layer, composed of a set of simple dynamical primitives, which realizes the reactive layer's commands in a way that is meaningful for the robot. Each of these independent layers comes with provable guarantees: optimality for the deliberative layer; collision avoidance and convergence for the reactive layer; and low-level performance for the gait layer, expressed as symbols of energy landscapes composed either in parallel or sequentially. This modularity opens the possibility of generalizing across multiple mobile manipulators, legged or wheeled.
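As an illustration of sequential composition in the gait layer, here is a minimal sketch in which each dynamical primitive is a gradient flow on a quadratic energy landscape, and a supervisor hands control to the next primitive once the state has settled into the current primitive's goal set. Sequential composition requires each goal set to lie inside the basin of the next primitive; that holds trivially here because every quadratic landscape has a global basin. The `Primitive` class, the quadratic energy, the primitive names, and the switching tolerance are hypothetical simplifications, real gait primitives on a legged robot are far richer, and parallel composition is not shown.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Vec = Tuple[float, float]


@dataclass
class Primitive:
    """Hypothetical primitive: gradient flow on V(x) = 0.5 * gain * ||x - goal||^2."""
    name: str
    goal: Vec
    gain: float = 1.0

    def energy(self, x: Vec) -> float:
        return 0.5 * self.gain * ((x[0] - self.goal[0]) ** 2 + (x[1] - self.goal[1]) ** 2)

    def velocity(self, x: Vec) -> Vec:
        # Negative gradient of the energy: descends the landscape toward the goal.
        return (-self.gain * (x[0] - self.goal[0]), -self.gain * (x[1] - self.goal[1]))


def run_sequence(x: Vec, primitives: Sequence[Primitive], tol: float = 1e-4,
                 dt: float = 0.01, max_steps: int = 10_000) -> Tuple[Vec, List[str]]:
    """Run primitives in order, switching once the current energy falls below tol."""
    log: List[str] = []
    for prim in primitives:
        steps = 0
        while prim.energy(x) > tol and steps < max_steps:
            vx, vy = prim.velocity(x)
            x = (x[0] + dt * vx, x[1] + dt * vy)  # forward-Euler integration step
            steps += 1
        log.append(f"{prim.name}: {steps} steps")
    return x, log
```

Usage: `run_sequence((0.0, 0.0), [Primitive("approach", (1.0, 0.0)), Primitive("push", (1.0, 1.0))])` first descends the "approach" landscape, then switches to "push" and ends near `(1.0, 1.0)`. Each primitive only has to guarantee convergence to its own goal set; correctness of the whole sequence then follows from the nesting of goal sets inside basins.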

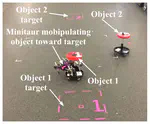

We believe this is the first provably correct deliberative/reactive planner to engage an unmodified, general-purpose mobile manipulator in physical rearrangements of its environment. It accomplishes a variety of tasks, including desired assemblies of objects comparable in size to the robot amid unanticipated conditions and obstacles, navigation among movable obstacles, and strategic escapes that exploit and manipulate the robot’s environment. To this end, we develop the mobile manipulation maneuvers needed for each task, successfully anchor the kinematic unicycle template to control the highly dynamic Minitaur robot, and integrate perceptual feedback with low-level control to coordinate the robot’s movement.
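The kinematic unicycle template can be illustrated with a minimal sketch. It assumes the reactive layer outputs a forward speed v and yaw rate ω for the template x' = v cos θ, y' = v sin θ, θ' = ω, and it uses a simple textbook point-stabilizing law, v = k_v ρ cos α and ω = k_ω α, where ρ is the distance to the goal and α the bearing error. This law is an assumption for illustration, not necessarily the controller used on Minitaur, and the anchoring step that maps (v, ω) to gait commands is platform-specific and not shown. The gains and function names are hypothetical.

```python
import math
from typing import Tuple

Pose = Tuple[float, float, float]  # (x, y, heading theta) in the plane


def wrap_angle(a: float) -> float:
    """Wrap an angle to [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def unicycle_command(pose: Pose, goal: Tuple[float, float],
                     k_v: float = 1.0, k_w: float = 3.0) -> Tuple[float, float]:
    """Point-stabilizing feedback (v, w); position converges when k_w > k_v."""
    x, y, theta = pose
    ex, ey = goal[0] - x, goal[1] - y
    rho = math.hypot(ex, ey)                         # distance to the goal
    alpha = wrap_angle(math.atan2(ey, ex) - theta)   # bearing error
    v = k_v * rho * math.cos(alpha)  # drive along the heading, scaled by alignment
    w = k_w * alpha                  # turn toward the goal
    return v, w


def step(pose: Pose, v: float, w: float, dt: float) -> Pose:
    """Forward-Euler step of x' = v cos(theta), y' = v sin(theta), theta' = w."""
    x, y, theta = pose
    return (x + dt * v * math.cos(theta), y + dt * v * math.sin(theta), theta + dt * w)


def simulate(pose: Pose, goal: Tuple[float, float], steps: int = 3000, dt: float = 0.01) -> Pose:
    """Close the loop between the feedback law and the template dynamics."""
    for _ in range(steps):
        v, w = unicycle_command(pose, goal)
        pose = step(pose, v, w, dt)
    return pose
```

Usage: `simulate((0.0, 0.0, math.pi / 2), (2.0, 1.0))` starts facing away from the goal, turns toward it, and drives in. With k_w > k_v the bearing error satisfies α' = (k_v/2) sin 2α − k_ω α, which decays to zero, after which ρ' = −k_v ρ cos²α drives the distance to zero; the final heading is left unconstrained.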